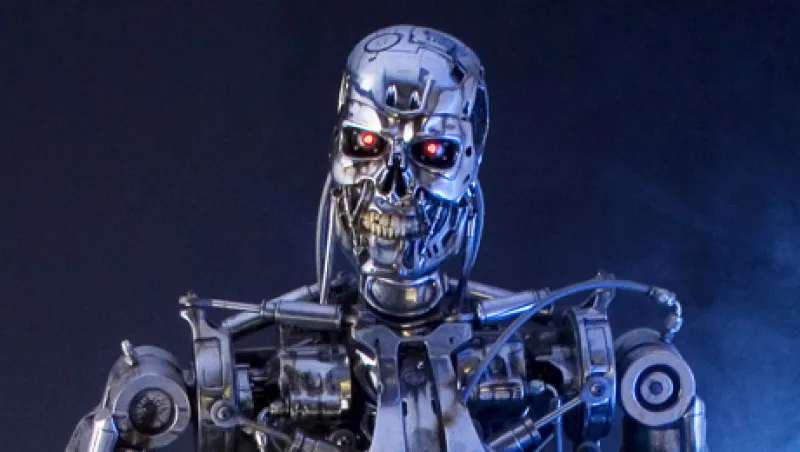

In James Cameron’s 1991 sci-fi classic, Terminator 2: Judgment Day, Arnold Schwarzenegger’s character — an autonomous humanoid robot assassin sent from the future to protect the boy who will later lead the rebellion against the computers controlling the world — enters a biker bar looking for a set of clothes and a motorcycle to start his mission. Featuring a head-up-display augmented-reality system, the Terminator can identify, among other things, the precise dimensions of every item of clothing worn by those in his field of vision. Simply by glancing at a burly biker, he sees sizing and other attributes flash before his android eyes: height, 6-foot-1; weight, 212 pounds. Were the film made today, an additional metric might have been projected on the Terminator’s retina: a real-time stock quote for the Harley-Davidson ticker, HOG.

It is impossible to know whether, during the ensuing bar fight, some intuition in his metal endoskeleton would have led the future-born cyborg to conclude that Harley-Davidson was oversold. What is certain is that we mortals are on the verge of a new era of digitally enabled complete situational awareness: wearable computing.

One experiment with wearable computing began in August 2013 at Fidelity Labs, the innovation and technology division of Boston-based financial services firm Fidelity Investments. The project uses Google Glass, a wearable computer developed by Google that projects information onto an optical head-mounted display and is controlled by voice commands and hand gestures. Although Fidelity’s Google Glass app in its current stage is limited to displaying quotes from major indexes after markets close, a video published on the Fidelity Labs website shows the company’s vision for Market Monitor: the capability to access individual brokerage accounts, view market news and information, and obtain stock quotes in real time, on the move.

Accessibility of portfolio information on the go is merely the first dimension that wearable computing unlocks. Head-up displays were long restricted to military pilots and drop-down helmet visors filled with lime-green target boxes. Wearable computing devices such as Google Glass might soon allow civilian users to scan their surroundings and identify targets: investment targets, that is.

Wearable head-up display systems will in the near future bring fundamental investment research into the physical world. Imagine glancing with a pair of smart glasses at a bar code or logo printed upon any of the myriad consumer products that you encounter and evaluate in the course of a normal day and instantly seeing in the corner of your eye a stock quote, price-earnings ratio and price history for the company that manufactures it.

With wearable computing, you can pair your own brain’s incredible capacity for pattern recognition with real-time corporate data on the trends you see unfolding before your eyes.

How many times have you noticed products flying off the shelves right in front of you at your local mall or supermarket while competitor offerings positioned right next to them go unnoticed? What about that new model of car disproportionately showing up in parking lots or drawing admiring glances at stoplights? The natural next step in investment research would be to cross-reference all such everyday experiences against a head-up display system that could identify the corporations associated with those products on the fly and transmit real-time pricing, fundamental and earnings data on the companies that manufacture them. Imagine knowing, for example, that juice and energy-bar maker Odwalla is part of Coca-Cola Co. (ticker KO) and that razor maker Gillette Co. was purchased in 2005 by Procter & Gamble Co. (PG) just by having the logos of those brands fall into the field of vision of your glasses’ optical recognition system.

Eventually, smart glasses will be able to connect brands on billboard ads, static ads in magazines and even moving product images in commercials to visual databases of the companies that produced them. Getting a real-time stock quote on the companies behind a new ad campaign in Sports Illustrated or Vogue, or behind a Super Bowl commercial, that personally moves you or inspires new confidence in the direction of a brand will finally close the loop between the advertising and investing industries. When mediated by smart glasses, even socializing at a bar with friends after work can transform into a fundamental investment research opportunity as your wearable computing system scans the label on the bottle of the latest fashionable vodka and its brand-to-investing target recognition system informs you that, for example, Cîroc is a brand of London-based beverage maker Diageo (DEO).
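Setting aside the hard part, which is recognizing the logo in the first place, the brand-to-company lookup itself is simple. Here is a minimal sketch in Python, assuming the glasses’ optical recognition system has already turned a label into a text string. The names `Listing`, `BRAND_TO_LISTING`, `normalize` and `lookup` are hypothetical, and the three entries are just the examples mentioned in this article; a real system would need a large, regularly updated database and a live quote feed, neither of which is shown here.

```python
import unicodedata
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Listing:
    """Publicly traded parent company behind a consumer brand."""
    parent: str
    ticker: str


# Hypothetical lookup table holding only the examples from this article.
BRAND_TO_LISTING = {
    "odwalla": Listing("Coca-Cola Co.", "KO"),
    "gillette": Listing("Procter & Gamble Co.", "PG"),
    "ciroc": Listing("Diageo", "DEO"),
}


def normalize(label: str) -> str:
    """Strip accents, case and outer whitespace so 'Cîroc' matches 'ciroc'."""
    decomposed = unicodedata.normalize("NFKD", label)
    without_accents = "".join(
        ch for ch in decomposed if not unicodedata.combining(ch)
    )
    return without_accents.strip().lower()


def lookup(label: str) -> Optional[Listing]:
    """Return the parent listing for a recognized brand label, or None."""
    return BRAND_TO_LISTING.get(normalize(label))


if __name__ == "__main__":
    for seen in ["Odwalla", "GILLETTE", "Cîroc", "Unknown Cola"]:
        hit = lookup(seen)
        if hit is None:
            print(f"{seen}: no publicly traded parent on file")
        else:
            print(f"{seen}: {hit.parent} ({hit.ticker})")
```

Normalizing the label before the lookup means that small differences in how a logo is read, such as capitalization or a dropped accent, still resolve to the same company.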

Such headset-enabled situational corporate awareness could be used to corroborate the visual experiences in front of you or highlight disconnects between the trends you observe in the consumption of a company’s products and the price movements of its shares. Such disconnects could raise the fascinating possibility that leading-edge trends in consumption you notice in your neighborhood mall or parking lot might not yet have been discounted into the price of the corresponding company’s stock. In other words, wearable computing might one day assist financial professionals in identifying time lags between real-world trends and market prices — and thus point out fundamental investment opportunities.

Internet-connected smart glasses with optical recognition capabilities could soon enable a new form of fundamental investment research that bridges the physical and digital worlds. For now, let’s call it “parking lot arbitrage.”

Smart glasses loaded with investment analytics software that register, with a single sweep of the head, the kaleidoscopic colors of brands and logos layered upon our urban landscape, and then parse, process and cross-reference those images against the associated publicly traded companies, might seem like something out of a Wall Street science fiction film. But they might soon become a reality.